Trusting Your Gut? Time to Spot Your Mental Traps

Here Are 7 Workplace Biases And What To Do About Them

Hit the ‘❤️ Like’ because I have finished all the Diwali mithaai and my brain needs a dopamine hit.

So, I have been off the grid for a bit.

Between a chaotic project at work, Diwali festivities, and trying to maintain a semblance of a social life, I am beginning to suspect ‘growing up’ was a mistake.

But I did manage to rewatch Moneyball (read the book!).

You know the story: Oakland A’s, Billy Beane, Brad Pitt being stressed, and Jonah Hill looking like a Big4 Audit Partner.

For decades, baseball scouts operated on the “eye test” - a gut feeling, honed over years, for what looked like talent. Their key metrics were simple: batting average, home runs, a “beautiful swing.”

It was tradition. It felt right.

Then, the nerds came in with their spreadsheets. They argued that unglamorous numbers like ‘on-base percentage’ were far better predictors of the only thing that actually mattered: winning games (or getting on base).

The scouts laughed. How could an algorithm replace artistry? How could data beat decades of intuition?

We look back now and wonder how they clung so fiercely to numbers that didn’t matter. It seems so obvious, doesn’t it?

But here’s the uncomfortable truth: We are all scouts.

We do it every single day. We cling to a “best practice” just because “it’s how we’ve always done it.” We mistake a presenter’s charisma for competence. And, of course, we let a beautiful deck overshadow the actual data. We have all seen a version of this guy in a meeting.

We see a “beautiful swing,” and our Stone Age brain [1] tells us it’s a home run, even when the data says it’s a strikeout.

Just as the scouts’ brains weren’t built to trust spreadsheets, our minds aren’t naturally equipped to spot these subtle-yet-powerful biases under the daily pressure to perform.

We are all clinging to our own “eye tests”... and they are costing us the game.

If you challenge the conventional wisdom, you will find ways to do things much better than they are currently done.

- Michael Lewis, Moneyball

Why We Are All Bad Scouts

These biases are the mental traps that keep us stuck in the dugout, trusting our “eye test” when we should be reading the spreadsheet. They are the hidden mental shortcuts that lead us to draw faulty conclusions from perfectly good data.

I used to think of data as a flashlight in a dark room. But that metaphor is too simple.

Think of it this way: data is a spotlight in a pitch-black warehouse. It illuminates one tiny patch with clarity... while simultaneously convincing you that the other 99% of the room doesn’t matter.

These biases are why we never bother to check the shadows.

Just as the Moneyball ‘nerds’ had to learn to see past batting average, we have to learn to spot these traps in our daily work.

🚨 Quick sidebar: If you want to support my work, here are three ways to help:

Screenwich: This is a small side project with friends: a free tool that makes analysing and valuing stocks a little easier. Sign up now! It’s free.

Desi Mauj: Planning a desi game night? This is another fun side project, built for social games like charades and family feud.

Children’s book: I recently started adapting these mental models for younger readers, simple stories and big questions for curious minds at home or in a classroom. You can buy the book on Amazon!

Seven Traps We All Fall For

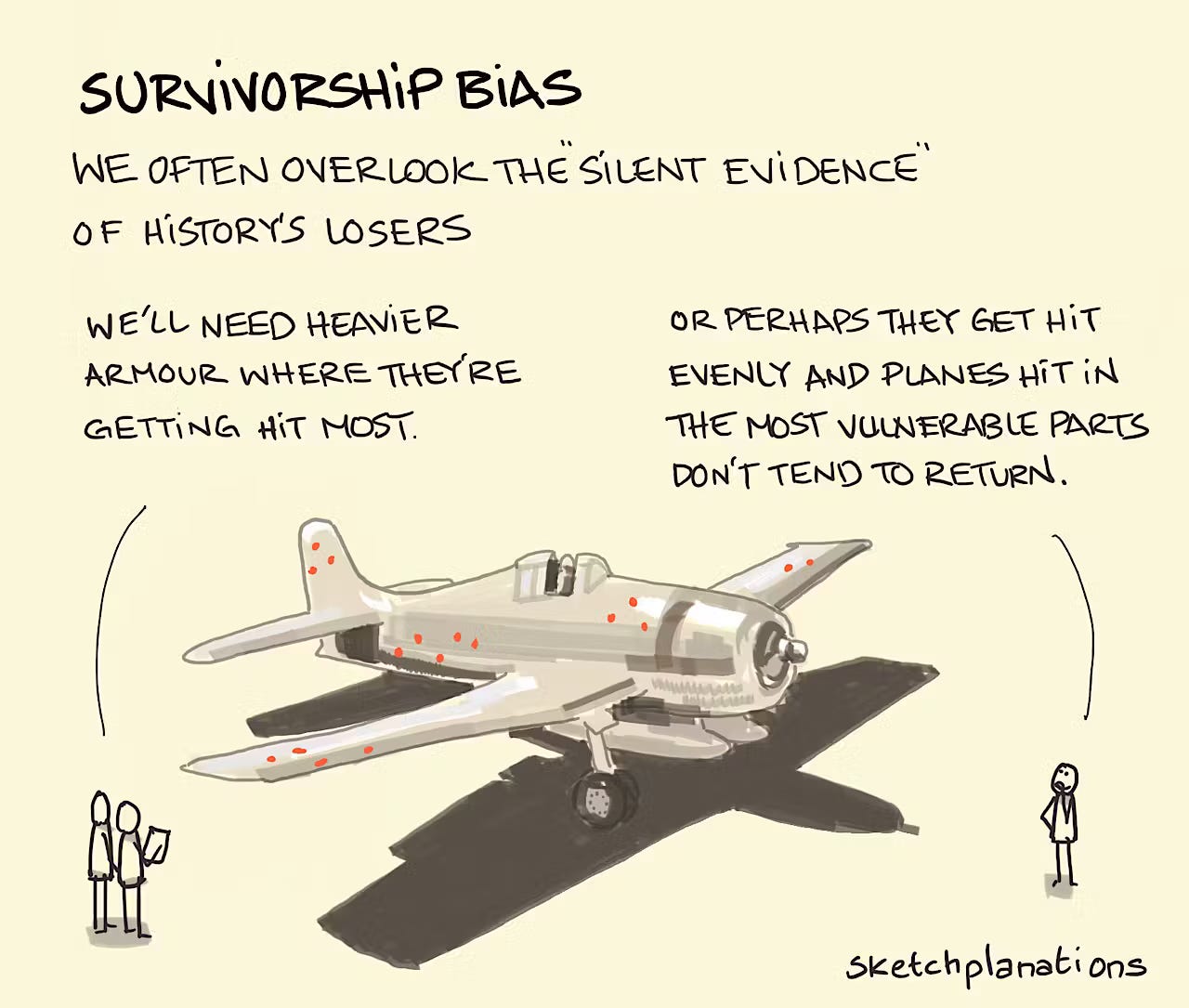

🏆 The Survivor’s Story (Survivorship Bias)

It is our tendency to base our playbook entirely on the “survivors,” while completely ignoring the failures... which is often where the real lessons are buried.

Where You See It: It’s copying the 5 AM routine of a millionaire influencer, without seeing the 10,000 others who tried it and are just... tired. It’s idolising the college-dropout billionaires like Zuckerberg or Gates, forgetting the thousands of dropouts who didn’t build an empire. It’s why we use exit interviews to understand company culture, while ignoring the “surviving” employees who are quiet, miserable, and still clocking in.

The Antidote: Ask: “Where are the bodies?” Study the shut-down projects. When we try to work out whether one thing causes another, we tend to see only what happened, not what didn’t.

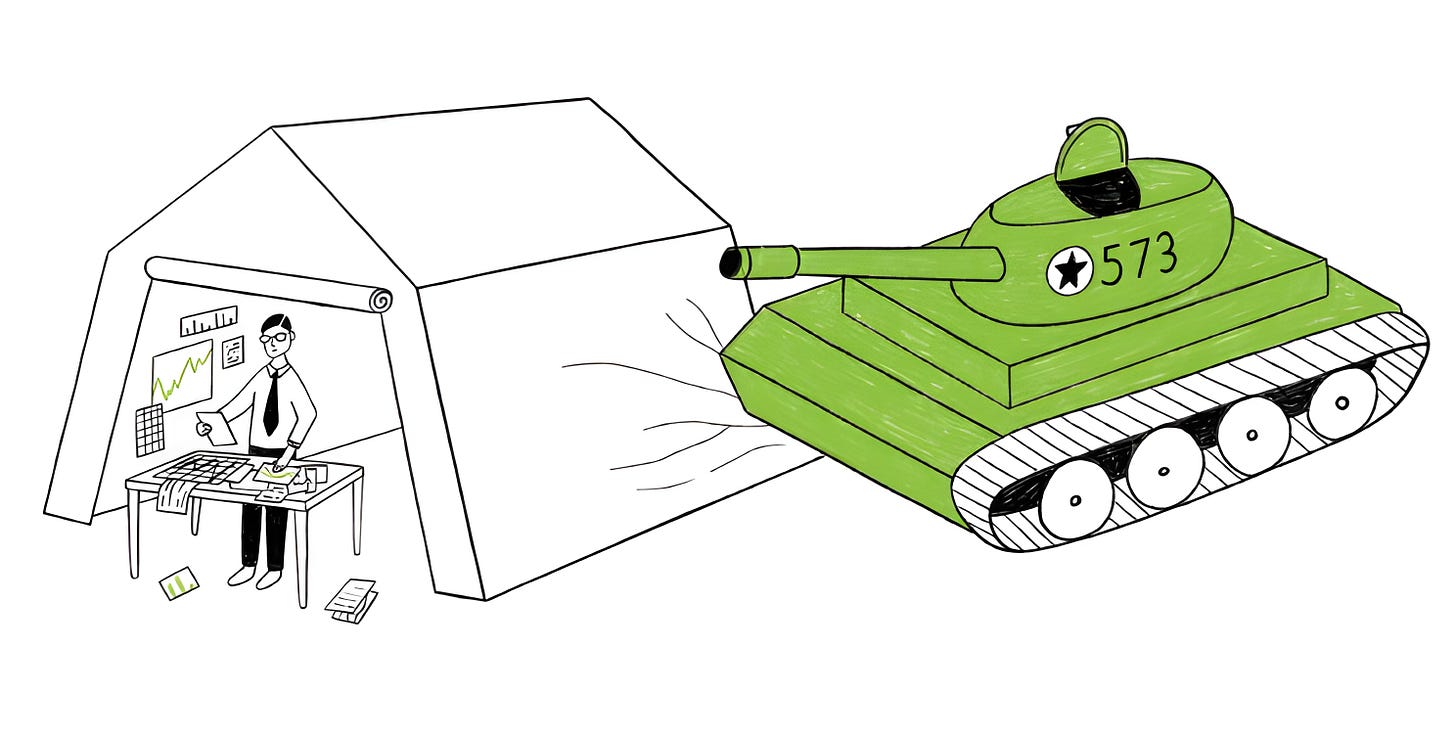

📏 The Measurement Mirage (McNamara Fallacy)

It’s the trap of obsessing over what’s easy to measure (hours logged, emails sent) while ignoring what’s vital to success (team morale, code quality, customer loyalty).

It’s named after Robert McNamara, the US Secretary of Defense who (disastrously) tried to win the Vietnam War with “body counts.”

Where You See It: This was the Moneyball scouts’ core error. They loved the easy metric (batting average) and ignored the important one (on-base percentage). We do this when we judge a developer by “lines of code” or a support team by “tickets closed” while satisfaction drops.

The Antidote: Ask: “What matters here that we aren’t counting?” Pair every key number with a qualitative insight. Go talk to a customer! Not everything that counts can be counted, and not everything that can be counted, counts.

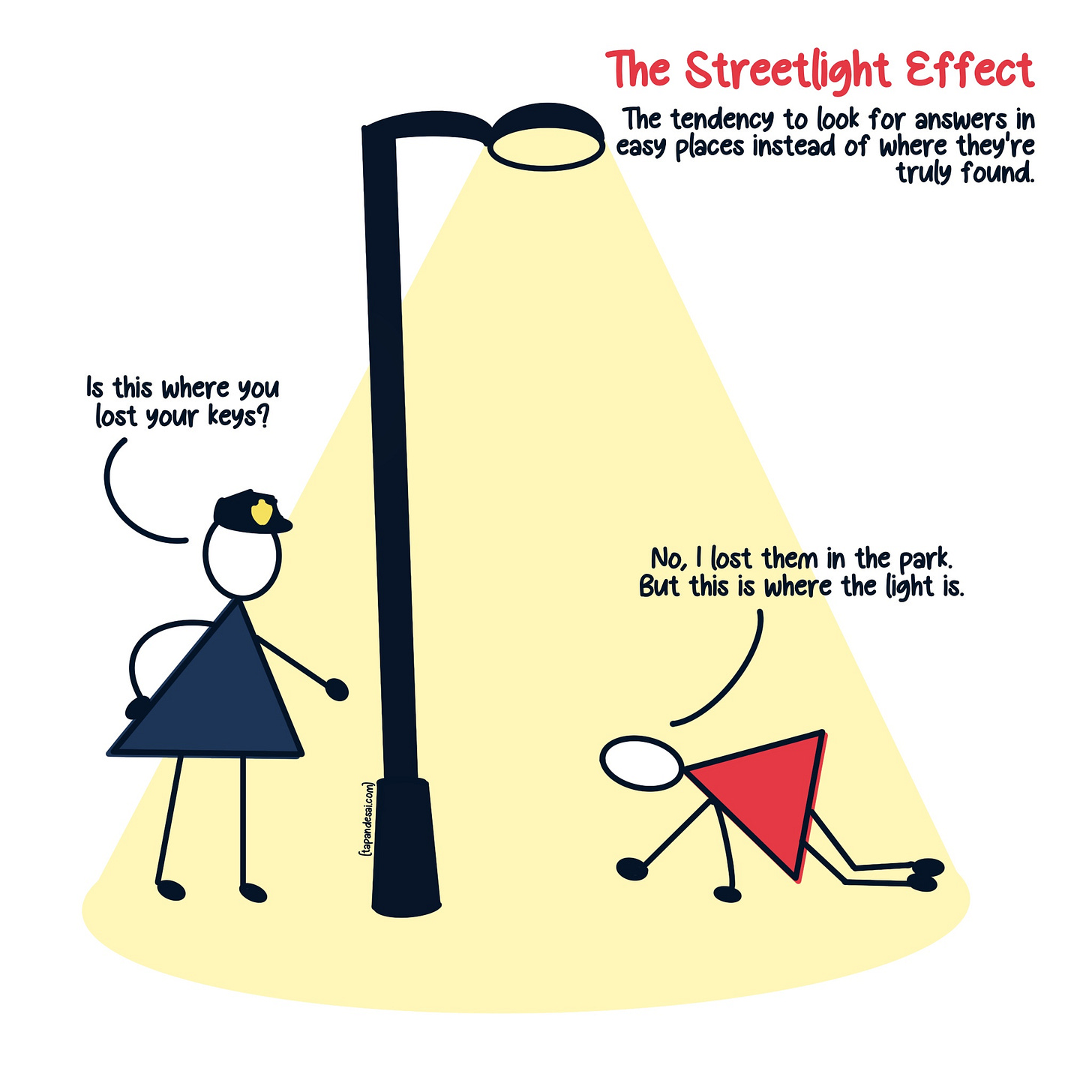

🔦 The Lamppost Search (Streetlight Effect)

The old joke: a man is searching for his keys under a lamppost. A cop asks, “Is that where you lost them?” He replies, “No, but it’s where the light is.” We do this all the time.

Where You See It: Making a major strategy decision using only your internal sales data because it’s clean and available, instead of doing the “dark” and “messy” work of real customer research.

The Antidote: Ask: “Are we looking where it’s easy, or where the answer actually is?” Be painfully honest about your data’s blind spots. Invest in getting the right data, not just the easy data.

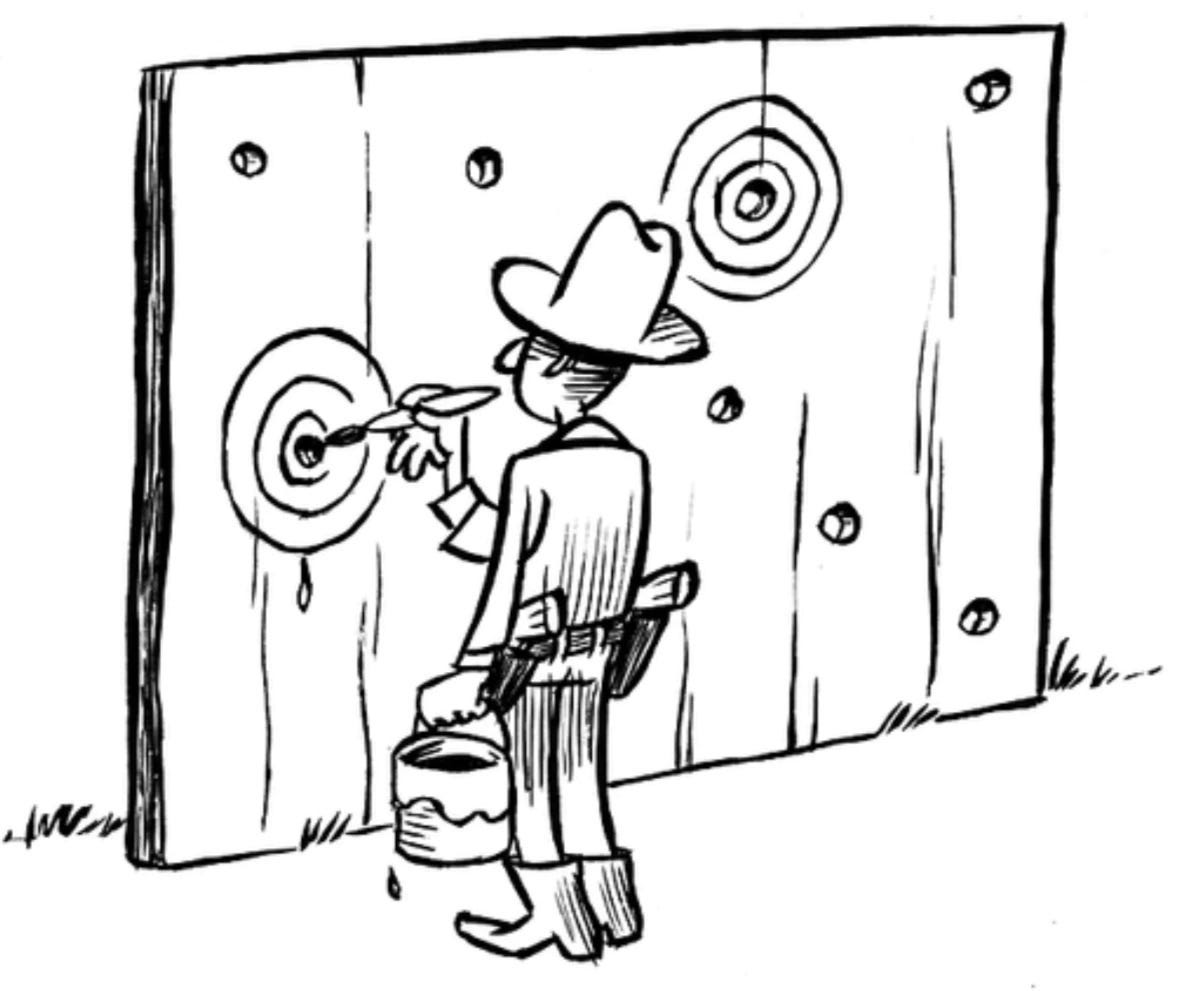

🍒 The Misdirection Trap (Cherry-Picking)

This is the classic “look over here!” misdirection. It’s the art of building a flawless argument by only showing the data that supports it, while conveniently hiding everything that doesn’t.

Where You See It: That sales report highlighting 300% growth in one tiny, new market... while glossing over the 30% decline in your main market. The glowing testimonials on the homepage, carefully curated from the 5% of users who are fans.

The Antidote: Ask: “What aren’t we seeing?” Actively hunt for the disconfirming evidence. Make “show me the failures” a standard part of every review.
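To see how a 300% headline can hide a shrinking business, here is a quick back-of-the-envelope sketch in Python. The revenue figures are made up for illustration (I am assuming the new market goes from 10 to 40 and the main market from 1,000 to 700); only the 300% and 30% rates come from the example above.

```python
# Hypothetical revenues (say, in thousands). Only the growth rates mirror
# the sales-report example; the absolute numbers are assumptions.
last_year = {"new_market": 10, "main_market": 1000}
this_year = {"new_market": 40, "main_market": 700}


def pct_change(before, after):
    """Percentage change from `before` to `after`."""
    return (after - before) / before * 100


# The cherry-picked view: one number per market, shown in isolation.
per_market = {m: pct_change(last_year[m], this_year[m]) for m in last_year}

# The full view: total revenue across both markets.
total_change = pct_change(sum(last_year.values()), sum(this_year.values()))

print(per_market)
print(round(total_change, 1))
```

With these assumed numbers, the new market really is up 300%, yet total revenue is down by roughly a quarter. Both statements are true; only one makes the slide.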

📖 The Compelling Story (Narrative Bias)

Narrative bias is our brain’s preference for stories over data. This bias is what makes us change an entire business strategy based on one vivid, emotional anecdote.

Where You See It: Pouring the entire budget into a feature because one charismatic salesperson told an amazing story about a client who begged for it, even as data shows 99% of users don’t care. It’s why investors bet on a company’s story instead of digging into its income statement or balance sheet. It’s why Nike can make you believe greatness starts with three words: Just Do It.

The Antidote: As Daniel Kahneman explains in Thinking, Fast and Slow, we must engage our “slow” analytical brain. Ask: “Is this story representative, or just a great story?” Use anecdotes as illustrations, not as proof.

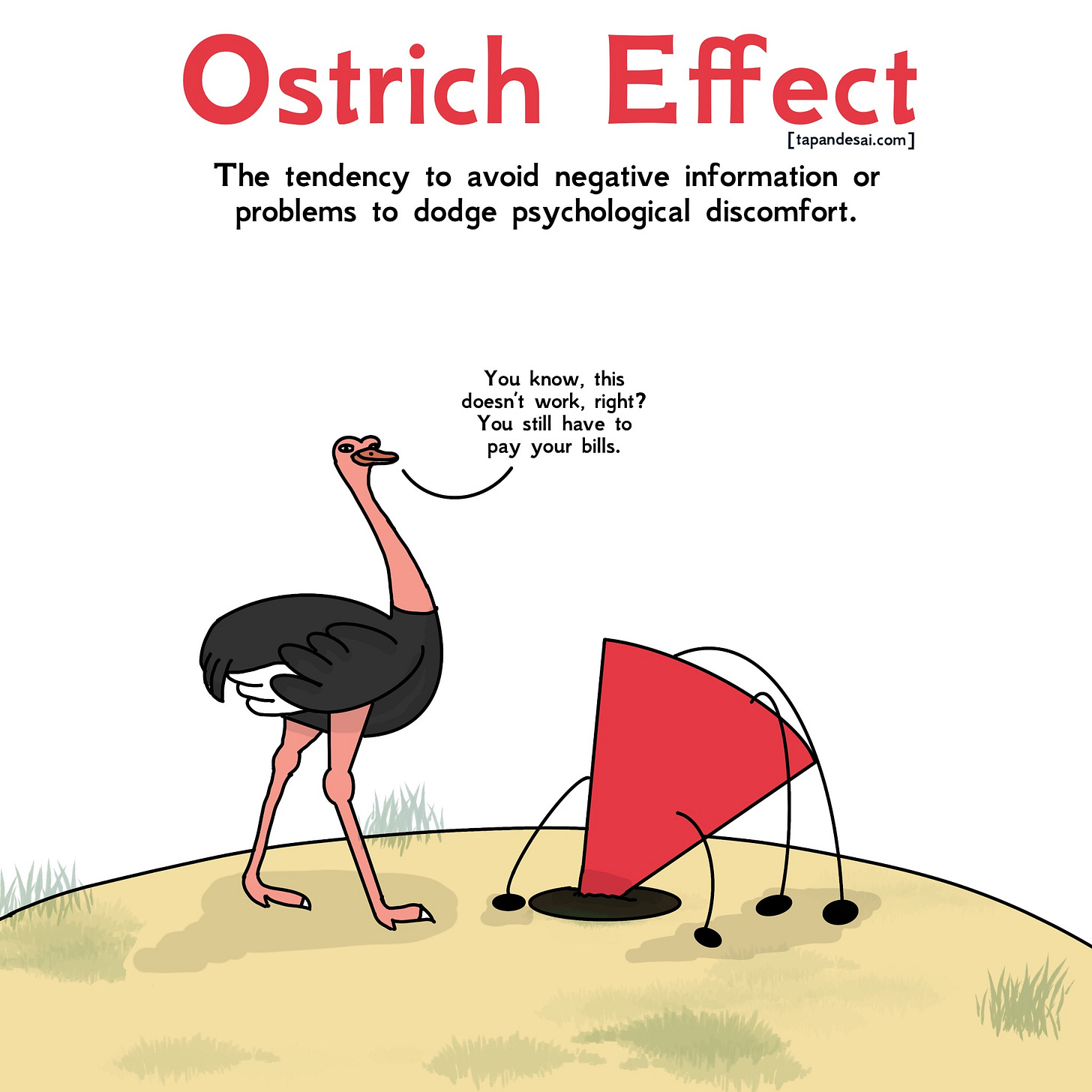

The Head in the Sand (Ostrich Effect)

This is our brain’s “la-la-la, I can’t hear you!” defence mechanism. It’s the active, wilful avoidance of negative (but crucial) information simply because facing reality feels bad.

Where You See It: That “red” metric on the dashboard that everyone just... skips over in the meeting. The negative feedback report sitting in your inbox, unread. Or not wanting to see your credit card balance after a weekend of partying.

The Antidote: Ask: “What uncomfortable truth are we all agreeing to ignore?” Make “reviewing the reds” a mandatory, blameless part of every meeting. A bad number is just a compass pointing to what needs fixing.

🎯 The Target Fixation (Goodhart’s Law)

As explained in Super Thinking: “When a measure becomes a target, it ceases to be a good measure.”

The moment you tell a team “your bonus depends on this one number,” they will hit that number... even if it means setting the rest of the business on fire.

Where You See It: Teachers “teaching to the test,” so kids pass but don’t learn. A sales team hitting its “new logo” target by signing up hundreds of low-value, high-churn customers. A content team chasing “clicks” with clickbait, destroying brand trust.

The Antidote: Ask: “Is hitting this number actually getting us what we want?” Use a balanced set of metrics (e.g., pair ‘new users’ with ‘retention rate’). Constantly check if the metric still serves the original goal.
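If you like your antidotes in code, pairing a target with a “guard” metric can be sketched in a few lines of Python. This is a minimal sketch, not a real monitoring setup: the function name `paired_check` and the numbers are hypothetical, and “worse” here simply means the guard metric went down.

```python
def paired_check(target_before, target_after, guard_before, guard_after):
    """Flag the case where the target metric improves while its guard metric drops."""
    target_up = target_after > target_before
    guard_down = guard_after < guard_before
    if target_up and guard_down:
        return "warning: target is up but the guard metric is down"
    return "ok"


# Hypothetical quarter: new users jump, retention rate falls.
print(paired_check(1000, 1500, 0.80, 0.55))

# Hypothetical quarter: new users and retention both improve.
print(paired_check(1000, 1200, 0.80, 0.82))
```

The point is not the code. It is that the target never gets celebrated without someone looking at the number standing next to it.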

Beyond the ‘Beautiful Swing’

The real lesson from Moneyball isn’t that spreadsheets are smart. It’s that our intuition, left unchecked, is dangerously flawed.

The scouts weren’t bad at their jobs. They were just human, falling for the same traps we do. Billy Beane’s true innovation was asking: “What if our ‘eye test’ is lying to us?”

That’s our job now. It’s easy to find data that confirms our gut. But we need to stop admiring the beautiful swing. Start counting the wins.

If you want to read books that explain similar biases, here are my top recommendations:

Or, subscribe now and get a free copy of Framework for Thought, my complete guide to these biases.

Until next time,

Tapan (Connect with me by replying to this email)

As an Amazon Associate, tapandesai.substack.com earns commission from qualifying purchases.

[1] Our brains were not built for this level of choice overload. Peter Bevelin explains that human evolution began about 4 million years ago, and for most of that time, we were part of hunter-gatherer societies. Agriculture, which radically shifted how we lived, only arrived around 10,000 years ago, practically yesterday on the evolutionary timeline.
